There's a running conversation on Twitter about the definition of "integration testing" and about the notion of "integration" in software, or in systems as a whole. I think that understanding the concept behind integration in software systems will give us clues on how to define the whats and hows of testing from an integration perspective.

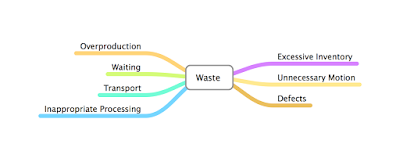

Good old Wikipedia defines "System Integration" as:

> In information technology, systems integration[2] is the process of linking together different computing systems and software applications physically or functionally,[3] to act as a coordinated whole.

"linking together"

Looking at the idea of linking things together brings biological relationships to mind. First, there is uni-directional linking. This is very similar to the idea of Symbiotic Commensalism, which is defined as

> a class of relationships between two organisms where one organism benefits from the other without affecting it.

Consider a library that you use to build a specific piece of functionality on your site. The methods or classes from that library give you the benefit of not needing to write your own. The library remains unchanged and is not affected by how you use it. This definition of a commensal relationship is somewhat shallow, though: at some point bugs in that library can make it problematic, and those bugs will eventually affect the system you are integrating it into.

The second type of linking is bi-directional. This reminds me of Symbiotic Mutualism. The "hope" in this type of integration is that it brings mutual benefits to both systems being integrated. The closest example that comes to mind is a user profile system that feeds into a marketing system, which in turn provides suggestions for a given product based on a distinct set of user choices.

As with any of the relationships defined above, certain attributes can cause one type of relationship to switch into another. In the case of software, this usually comes in the form of a bug: either an intentional bug that leads to your data being stolen for nefarious purposes (Symbiotic Parasitism), or a bug that eventually leads to the demise of another system, such as a backdoor hack whose payload can delete everything (Symbiotic Amensalism).

"perceived risks"

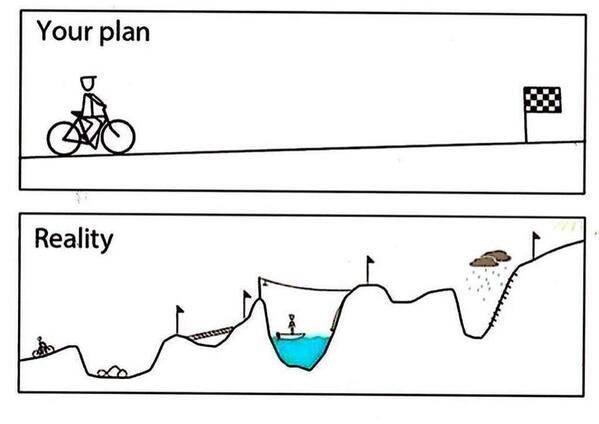

So how do we test for integration risks? Testing the functionality, behavior, and purpose of the resulting coordinated whole will give you one part of the story that you are trying to write. Understanding the key dependency points and data hand-offs between both systems is essential. But that's not all: you also need to be able to tell the story of how you are going to test.
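To make the idea of a data hand-off a bit more concrete, here is a minimal sketch in Python of the profile-to-marketing example from earlier. Everything in it is hypothetical and made up for illustration (`ProfileSystem`, `MarketingSystem`, the payload keys, the catalog); real systems would sit behind APIs or queues. The only point is to show two different kinds of checks: one on the dependency point itself, and one on the coordinated whole.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserProfile:
    user_id: str
    interests: tuple[str, ...]


class ProfileSystem:
    """Hypothetical upstream system that owns the user's choices."""

    def __init__(self) -> None:
        self._profiles: dict[str, UserProfile] = {}

    def save(self, profile: UserProfile) -> None:
        self._profiles[profile.user_id] = profile

    def export(self, user_id: str) -> dict:
        # The hand-off payload. Its shape is what the other side depends on.
        profile = self._profiles[user_id]
        return {"user_id": profile.user_id, "interests": list(profile.interests)}


class MarketingSystem:
    """Hypothetical downstream system that turns choices into suggestions."""

    def __init__(self, catalog: dict[str, str]) -> None:
        # Assumed catalog format: product name -> interest tag.
        self._catalog = catalog

    def suggest(self, payload: dict) -> list[str]:
        interests = set(payload["interests"])
        return sorted(p for p, tag in self._catalog.items() if tag in interests)


def check_handoff() -> list[str]:
    profiles = ProfileSystem()
    profiles.save(UserProfile("u1", ("hiking", "coffee")))
    marketing = MarketingSystem(
        {"trail boots": "hiking", "espresso maker": "coffee", "desk lamp": "office"}
    )

    payload = profiles.export("u1")
    # Dependency point: the payload carries the keys the consumer reads.
    assert {"user_id", "interests"} <= payload.keys()

    # Coordinated whole: suggestions reflect this user's choices and nothing else.
    suggestions = marketing.suggest(payload)
    assert suggestions == ["espresso maker", "trail boots"]
    return suggestions
```

The first assertion would catch a renamed or dropped field on the profile side before it quietly breaks the marketing side. The second tells the product story: given these choices, these are the suggestions a user should see.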

In 2010, Michael Bolton wrote a blog post on Test Framing, which became a set of lenses that I "wear" whenever I need to design tests around a given feature or product. Simply put, in order to test anything, you need to be able to tell two parallel stories: the story of the product and the story of the testing.

Integration testing, like testing any product, is just a variation in the mission of your testing. Your ideas and your awareness of the moving parts will hopefully point you to techniques that surface the most important problems, the ones that lead to risk, in the least amount of time.